Google Play Trending

Dynamically weaving stories together with the Google Play Store’s Trending section.

In the middle of 2015, Google tasked us with exploring how we could improve the experience of discovering new movies, TV episodes, music, and books. At the time, the Play Entertainment Store followed the expected conventions: one could browse new releases, most popular, recommended, deals, by genre, and by medium – and get the expected short description and user reviews for any given piece of media. The common task-oriented use cases seemed to be adequately supported (“I want to watch a comedy” or “I want to listen to the album Coloring Book”). However, we kept wondering: if it’s not a piece of media that is already somehow familiar and interesting to me, why should I care about it?

What gets us excited about new movies, series, albums, and books?

We began by assembling all the sources that we personally use to figure out what to watch, listen to, or read next, such as The New York Times reviews, Rolling Stone articles, Pitchfork, music videos on YouTube, Rotten Tomatoes, or talk show appearances. There was an abundance of these stories about pieces of media, and they were already providing compelling answers to “why should I care” about a new release. But these contextual narratives were all scattered around the web. If one hadn’t been hooked into the lore of a new release before reaching the Google Play Store – there was little chance of getting excited while browsing titles.

Luckily, Google knows a thing or two about organizing related content from around the web.

After some initial sketches and experiments, there appeared to be a powerful opportunity to provide deeper context around new and featured content. By assembling related news, articles, reviews, pull-quotes, additional works by the same creators or performers, TV appearances, and more – from a wide range of sources – these compelling stories around a piece of media could be automagically woven together, and not only tell us “why should I care?” about a release, but also assemble a bundle of related content that becomes even more interesting based on its shared relationships.

Starting with a story

Trending stories are seeded with an article or news story about a piece of media, actor, artist, or musician.

Contextual content

Based on the user's location, we explored opportunities for contextually relevant content such as movie showtimes or concerts.

Rich Previews

Media previews can load in-line, keep playing while one continues to explore the story, and be flicked away when one is done.

Related Content

Other pieces of content related to any item in the trending story can be gathered, such as other films in the series or other works by a director or artist.

Related People

By identifying people related to a piece of content, additional relevant stories and people can be gathered.

Creating an interface system that can design itself

At first the design process was entirely manual – pick a movie, album, or book, find all the interesting related content we could, assemble that content into a logical narrative, and iterate on interface design approaches. As our collaborating team at Google refined the technical process through which these bundles of content could be algorithmically generated, Type/Code focused on refining our design system into a set of modules that could be mixed and matched, depending on what types of supporting content are returned for a given piece of source media.
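As a rough illustration of the mix-and-match idea, here is a minimal Python sketch that picks modules based on which kinds of supporting content came back for a source title. The module names, content-type keys, ordering, and the `assemble_story` function are all hypothetical; the case study does not describe the actual Play Trending modules or their selection logic. The one rule taken from the text is that every story is seeded with an article.

```python
from typing import Dict, List

# Hypothetical module catalogue: each module lists the content types it needs.
MODULE_REQUIREMENTS: Dict[str, List[str]] = {
    "hero_story": ["article"],
    "inline_preview": ["trailer"],
    "pull_quote": ["review_quote"],
    "showtimes": ["showtime"],
    "related_works": ["related_work"],
    "related_people": ["person"],
}

# Hypothetical narrative order: seed story first, related people last.
MODULE_ORDER: List[str] = [
    "hero_story",
    "inline_preview",
    "pull_quote",
    "showtimes",
    "related_works",
    "related_people",
]


def assemble_story(content: Dict[str, list]) -> List[str]:
    """Return the modules, in narrative order, whose required content is present."""
    if not content.get("article"):
        # Trending stories are seeded with an article, so nothing can be built without one.
        raise ValueError("a trending story needs a seed article")
    return [
        module
        for module in MODULE_ORDER
        if all(content.get(kind) for kind in MODULE_REQUIREMENTS[module])
    ]


if __name__ == "__main__":
    returned = {
        "article": ["Review of the album"],
        "trailer": ["Official video"],
        "person": ["Artist"],
        "showtime": [],  # nothing nearby, so no showtimes module
    }
    print(assemble_story(returned))
```

Keeping the requirements declarative means a new module type only needs one catalogue entry and a slot in the ordering, rather than new branching logic.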

As the types of content we were designing around became progressively more codified, we spent several iterations experimenting with how these bundles could be presented as compellingly as possible. After some early design experiments, we knew we wanted to avoid the ‘feed of cards’ motif common to Google’s Material Design system. Our design thesis centered on weaving this content into a unified narrative that, taken together, tells a comprehensive story – not a list of disparate articles, videos, and links. We could lean on several editorial design conventions, striking a balance between a holistic visual language and letting the content of each segment inform its final aesthetic.

Specifications for various module types that can be assembled as needed for a trending story.


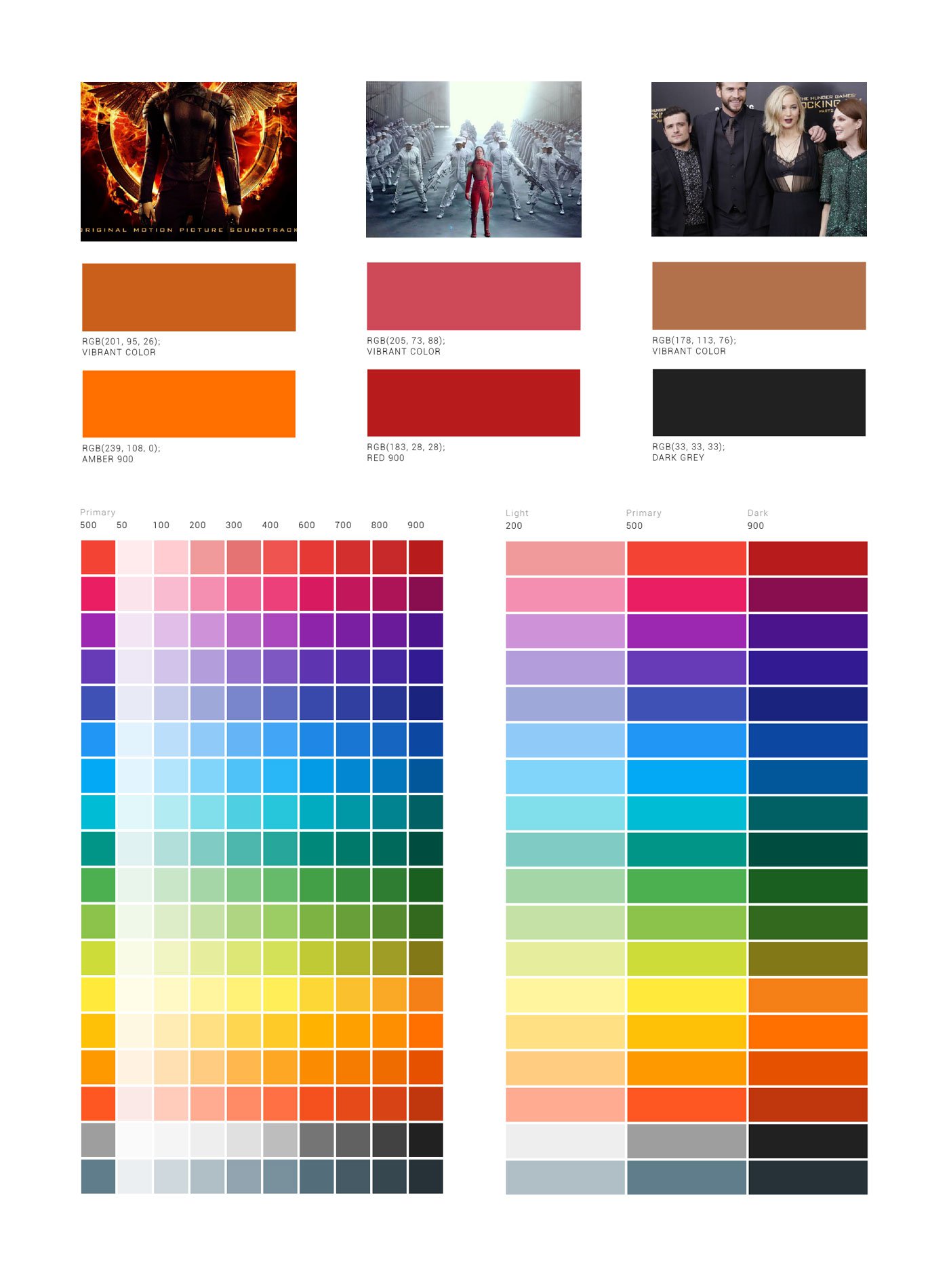

Colors for each module are dynamically generated from source media.


We had zero control over what images might be available for a given piece of supporting content. By algorithmically identifying dominant colors in supporting content images, we could dynamically set the color palette of a given segment, and use image blurs and color treatments to set abstract section backgrounds. We ultimately established a set of scenario-based rules (Is there an image? Is it dark or light? What is its base color? What is its accent color? What Material Design palette colors do these map to? Can this image be used as an abstract background? Should we use a flat color background instead?) to inform how each segment should be composed based on its own attributes.
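To make a few of those scenario-based rules concrete, here is a minimal Python sketch operating on a list of RGB pixel tuples. It assumes a coarse quantization histogram for the dominant color, WCAG relative luminance to decide between light and dark text, nearest-swatch matching against a small illustrative subset of Material 500-level colors, and a hypothetical size threshold for whether an image can serve as a blurred background. All function names, thresholds, and the fallback swatch are assumptions; the case study does not say how colors were actually extracted (on Android, the AndroidX Palette library is one production option for swatch extraction). The accent-color question is not covered here.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Optional, Sequence, Tuple

RGB = Tuple[int, int, int]

# Illustrative subset of Material Design 500-level swatches (not the full palette).
MATERIAL_500: Dict[str, RGB] = {
    "red": (0xF4, 0x43, 0x36),
    "pink": (0xE9, 0x1E, 0x63),
    "purple": (0x9C, 0x27, 0xB0),
    "indigo": (0x3F, 0x51, 0xB5),
    "blue": (0x21, 0x96, 0xF3),
    "teal": (0x00, 0x96, 0x88),
    "green": (0x4C, 0xAF, 0x50),
    "amber": (0xFF, 0xC1, 0x07),
    "deep_orange": (0xFF, 0x57, 0x22),
    "blue_grey": (0x60, 0x7D, 0x8B),
}


def dominant_color(pixels: Sequence[RGB], bucket: int = 32) -> RGB:
    """Most frequent color after quantizing each channel into coarse buckets."""
    counts = Counter((r // bucket, g // bucket, b // bucket) for r, g, b in pixels)
    (qr, qg, qb), _ = counts.most_common(1)[0]
    half = bucket // 2  # report the center of the winning bucket
    return (qr * bucket + half, qg * bucket + half, qb * bucket + half)


def luminance(rgb: RGB) -> float:
    """WCAG 2.x relative luminance of an sRGB color."""
    def linear(c: int) -> float:
        s = c / 255
        return s / 12.92 if s <= 0.03928 else ((s + 0.055) / 1.055) ** 2.4

    r, g, b = (linear(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def text_on(rgb: RGB) -> str:
    """Pick light or dark text, whichever gives the higher WCAG contrast ratio."""
    lum = luminance(rgb)
    vs_white = 1.05 / (lum + 0.05)
    vs_black = (lum + 0.05) / 0.05
    return "light" if vs_white >= vs_black else "dark"


def nearest_material(rgb: RGB) -> str:
    """Map a color to the closest swatch by squared RGB distance."""
    return min(
        MATERIAL_500,
        key=lambda name: sum((a - b) ** 2 for a, b in zip(rgb, MATERIAL_500[name])),
    )


@dataclass
class SegmentStyle:
    background: str  # "blurred_image" or "flat_color"
    palette_name: str
    text: str  # "light" or "dark"


def style_segment(
    pixels: Optional[Sequence[RGB]],
    min_pixels: int = 64 * 64,  # hypothetical: smaller images are not used as backgrounds
    fallback: str = "blue_grey",  # hypothetical default swatch when there is no image
) -> SegmentStyle:
    if not pixels:
        # No image at all: flat background in the default swatch.
        return SegmentStyle("flat_color", fallback, text_on(MATERIAL_500[fallback]))
    base = dominant_color(pixels)
    name = nearest_material(base)
    if len(pixels) >= min_pixels:
        # Large enough to blur into an abstract background; text contrasts with the dominant color.
        return SegmentStyle("blurred_image", name, text_on(base))
    # Too small to stretch: use the mapped swatch as a flat background instead.
    return SegmentStyle("flat_color", name, text_on(MATERIAL_500[name]))
```

Each question in the rule list becomes a small, testable function, so the composition step in `style_segment` reads as the decision tree itself.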

We did have control over how content assembled itself on the screen. We leveraged subtle build-in and scroll-based transitions to guide a user’s eyes through each segment, and allow each segment to seamlessly transition from one to the next. Through dynamic color palettes and image treatments, coupled with a variety of layout approaches and corresponding transitions for various types of supporting content, each Play Trending bundle could be automatically generated, but still feel both unique and cohesive.
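One common way to drive subtle build-ins from scrolling is to map the scroll position to a normalized progress value for each segment and then pass it through an easing curve. The Python sketch below shows that math in isolation. The function names, the half-viewport span, the 24 px rise, and the cubic ease-out are all assumptions for illustration, not the transitions that shipped in Play Trending.

```python
from typing import Dict


def scroll_progress(scroll_y: float, segment_top: float, viewport_h: float, span: float = 0.5) -> float:
    """0.0 when the segment's top edge enters at the bottom of the viewport,
    1.0 once the page has scrolled a further `span` viewport heights; clamped in between."""
    start = segment_top - viewport_h
    end = start + span * viewport_h
    return max(0.0, min(1.0, (scroll_y - start) / (end - start)))


def ease_out_cubic(t: float) -> float:
    """Fast start and gentle settle, so elements arrive rather than slide linearly."""
    return 1.0 - (1.0 - t) ** 3


def build_in(scroll_y: float, segment_top: float, viewport_h: float, rise_px: float = 24.0) -> Dict[str, float]:
    """Opacity and upward offset for a segment's build-in, driven by scroll position."""
    eased = ease_out_cubic(scroll_progress(scroll_y, segment_top, viewport_h))
    return {"opacity": eased, "translate_y": (1.0 - eased) * rise_px}


if __name__ == "__main__":
    # A segment starting 1000 px down the page, viewed in an 800 px tall viewport.
    for y in (200, 400, 600):
        print(y, build_in(y, 1000, 800))
```

Because progress is derived from scroll position rather than elapsed time, scrolling back up reverses the build-in, which helps segments hand off to one another smoothly.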

The new ‘Trending’ section on the Play Store is both incredibly useful and well-designed … maybe make the rest of the Play Store as sleek as this.— Android Police

The Google Play Store Trending section started rolling out to a subset of users in mid-2016, and after strong initial engagement with the beta, the feature was launched to all US users by the end of 2016. While we are unable to share specific numbers, we can say that both engagement time with Trending bundles and subsequent conversion on media featured within these stories are moving very much in the desired direction.
